The question used to be simple: which AI model is the smartest? In 2026, that question is the wrong one to ask.

With Claude Opus 4.7, GPT-5.5, Gemini 3.1 Pro, Claude Sonnet 4.6, Grok 4, and the restricted-access Claude Mythos all competing at the frontier, the gap between the top models on general benchmarks has narrowed to single-digit percentage points. What hasn’t narrowed is how differently they perform on the specific tasks that actually matter to your work.

The era of model loyalty is over. The era of role-based model selection is here.

This guide cuts through the benchmark noise and gives you a practical answer to a practical question: given what you do every day, which model should you be using right now?

The 2026 Frontier at a Glance

Before diving into roles, here’s where the major frontier models stand as of April 2026. All are publicly available except Claude Mythos, which is restricted-access:

| Model | Provider | SWE-bench Verified | GPQA Diamond | Input $/1M | Availability |
|---|---|---|---|---|---|
| Claude Mythos | Anthropic | 93.9% | 94.6% | — | Restricted |
| GPT-5.5 | OpenAI | 88.7% | — | $5.00 | ChatGPT |
| Claude Opus 4.7 | Anthropic | 87.6% | — | $5.00 | API + Claude.ai |
| Gemini 3.1 Pro | Google | — | 94.3% | $2.00 | API + Gemini App |
| Claude Sonnet 4.6 | Anthropic | 79.6% | — | $3.00 | API + Claude.ai |

No single model clearly dominates across all roles. That’s the whole point.

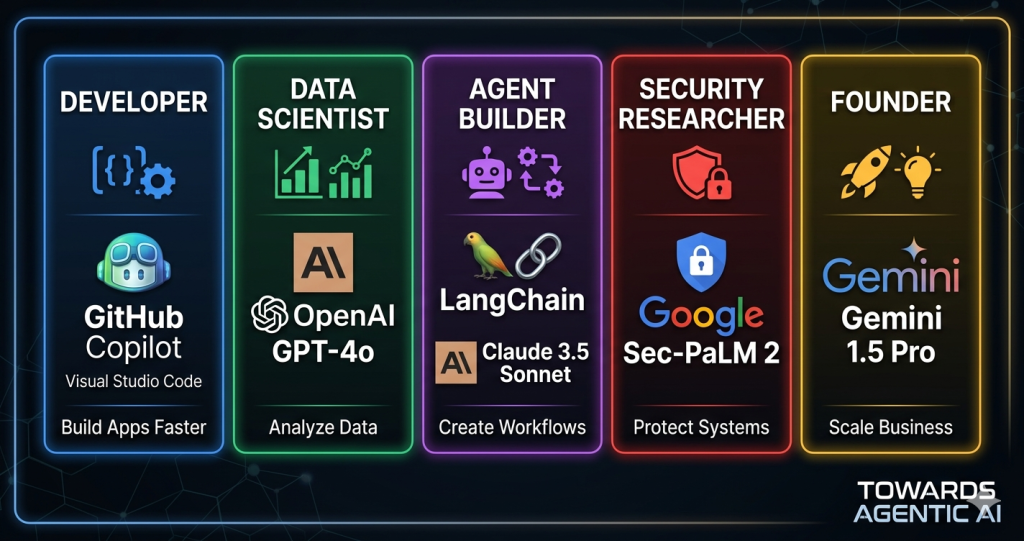

Role 1: The Software Developer

Recommended model: Claude Opus 4.7

If your day involves writing, reviewing, debugging, or refactoring code — especially across large, interconnected codebases — Claude Opus 4.7 is the model built for your workflow.

Released on April 16, 2026, Opus 4.7 scores 87.6% on SWE-bench Verified and leads the field on SWE-bench Pro at 64.3%, the harder variant that tests real-world issue resolution across complex repositories. GPT-5.5 scores 88.7% on Verified but trails at 58.6% on Pro — a meaningful gap when your codebase is large and architectural reasoning matters more than isolated file edits.

Beyond benchmarks, Opus 4.7 powers Cursor and Windsurf, the two most widely adopted AI coding editors in 2026. That’s not a coincidence — it reflects how the model performs on the tasks developers actually care about: understanding intent across a full codebase, proposing refactors that don’t break downstream dependencies, and generating production-ready code on the first attempt.

One practical note: GPT-5.5 uses 72% fewer output tokens than Opus 4.7 on equivalent coding tasks. If your workload is high-volume and latency-sensitive, GPT-5.5 may reduce costs — but you’ll trade architectural reasoning depth for token efficiency. For most developers, the quality of Opus 4.7’s output on complex tasks justifies the cost difference.
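The trade-off can be roughed out with a little arithmetic. The sketch below uses the output prices listed in this guide's cost table ($25 per 1M output tokens for Opus 4.7, $30 for GPT-5.5) and the 72% figure quoted above. The 10,000-token baseline is a hypothetical value chosen only for illustration, and the calculation ignores input tokens, retries, and any quality difference between the two outputs.

```python
# Output prices in USD per 1M tokens, taken from this guide's cost table.
OPUS_OUTPUT_PRICE = 25.00
GPT55_OUTPUT_PRICE = 30.00

# Reduction in output tokens quoted for GPT-5.5 relative to Opus 4.7.
OUTPUT_TOKEN_REDUCTION = 0.72


def output_cost(tokens: int, price_per_million: float) -> float:
    """Return the USD cost of generating `tokens` output tokens."""
    return tokens / 1_000_000 * price_per_million


# Hypothetical task: assume Opus 4.7 emits 10,000 output tokens.
opus_tokens = 10_000
gpt55_tokens = round(opus_tokens * (1 - OUTPUT_TOKEN_REDUCTION))

opus_cost = output_cost(opus_tokens, OPUS_OUTPUT_PRICE)
gpt55_cost = output_cost(gpt55_tokens, GPT55_OUTPUT_PRICE)

print(f"Opus 4.7: {opus_tokens} output tokens, ${opus_cost:.3f}")
print(f"GPT-5.5:  {gpt55_tokens} output tokens, ${gpt55_cost:.3f}")
```

Under these assumptions the output-side cost drops by roughly two thirds despite GPT-5.5's higher per-token price. Whether that saving is worth it depends on how often the shorter output needs a second attempt.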

When to switch: Use GPT-5.5 for precise tool use or file navigation tasks, or, once its API is available, when API cost is a hard constraint.

Role 2: The Data Scientist

Recommended model: Gemini 3.1 Pro

Data science sits at the intersection of statistics, domain reasoning, and code — and Gemini 3.1 Pro was built for exactly this intersection.

Released on February 19, 2026, Gemini 3.1 Pro leads on GPQA Diamond at 94.3% — expert-level questions in physics, chemistry, and biology — and scores 77.1% on ARC-AGI-2, more than double what Gemini 3 Pro achieved just three months earlier. These aren’t synthetic benchmarks. They reflect the kind of multi-disciplinary reasoning that data scientists rely on when interpreting results, designing experiments, and explaining findings.

The 1 million token context window is particularly relevant for data scientists. You can pass an entire dataset schema, a long analytical notebook, or multiple research papers in a single prompt — and Gemini 3.1 Pro processes them coherently.

Pricing is also compelling for data workloads: at $2 per million input tokens (vs $5 for Claude Opus 4.7 or GPT-5.5), Gemini 3.1 Pro is the most cost-effective frontier model for workloads dominated by large inputs.
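To see what that price gap means for an input-heavy job, here is a minimal sketch using the input prices quoted in this guide. The workload (200 prompts of 500,000 input tokens each) is hypothetical, and the code assumes a flat per-token rate with no caching or long-context surcharges, which may not match a provider's actual billing.

```python
# Input prices in USD per 1M tokens, as quoted in this guide.
INPUT_PRICE = {
    "Gemini 3.1 Pro": 2.00,
    "Claude Sonnet 4.6": 3.00,
    "Claude Opus 4.7": 5.00,
    "GPT-5.5": 5.00,
}


def input_cost(tokens: int, model: str) -> float:
    """Return the USD cost of sending `tokens` input tokens to `model`."""
    return tokens / 1_000_000 * INPUT_PRICE[model]


# Hypothetical workload: 200 prompts of 500,000 input tokens each.
PROMPTS = 200
TOKENS_PER_PROMPT = 500_000
total_tokens = PROMPTS * TOKENS_PER_PROMPT

costs = {model: input_cost(total_tokens, model) for model in INPUT_PRICE}

# Print cheapest first.
for model, cost in sorted(costs.items(), key=lambda item: item[1]):
    print(f"{model:<18} ${cost:,.2f}")
```

The ratio between models stays fixed regardless of volume, so the absolute saving scales linearly with how much context you send.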

Complement with: Claude Sonnet 4.6 for generating and debugging data pipeline code, and Perplexity for citation-backed research.

Role 3: The AI Agent Builder

Recommended model: Claude Sonnet 4.6

If you are building multi-step autonomous agents — systems that plan, use tools, navigate interfaces, and execute workflows end-to-end — Claude Sonnet 4.6 is the model that was purpose-built for this job.

Launched February 17, 2026, Sonnet 4.6 scores 79.6% on SWE-bench Verified and 72.5% on OSWorld, the computer use benchmark that measures how well a model can navigate GUIs, fill forms, and coordinate across multiple browser tabs. That 72.5% is 34 percentage points ahead of GPT-5.4 on the same benchmark. No other publicly available model comes close on GUI-based agentic tasks.

The practical implications are significant. Sonnet 4.6 can:

- Break a broad user goal into executable subtasks

- Navigate complex multi-step web forms

- Coordinate across browser tabs and desktop applications

- Maintain coherent state across long agent sessions with its 1M token context window (beta)

In April 2026, Anthropic launched Claude Managed Agents — infrastructure that lets you offload the agent harness entirely to Anthropic, rather than spending weeks building your own. This is a significant signal: Anthropic is positioning Sonnet 4.6 not just as a capable model but as the foundation of a complete agent platform.

Enterprise adoption confirms this. Rakuten, CRED, TELUS, and Zapier have all deployed multi-agent coordination systems built on Claude. If you are building agents for production, this is where the tooling ecosystem is most mature.

When to use Opus 4.7 instead: When your agent’s primary task is deep code reasoning or complex software engineering, step up to Opus 4.7 for the heavier cognitive work.

Role 4: The Security Researcher

Recommended model: Claude Mythos (if you can get access)

Claude Mythos is the most capable AI model ever benchmarked: it scores 93.9% on SWE-bench Verified and 94.6% on GPQA Diamond, and it independently identified thousands of zero-day vulnerabilities before Anthropic restricted its release.

The restriction is intentional. Anthropic classified Mythos as a strategic defensive asset and limited access to approximately 50 vetted organizations through Project Glasswing — a partnership that includes government agencies and select cybersecurity firms. Sam Altman publicly called this “fear-based marketing,” but the benchmarks make the caution understandable.

For security professionals who cannot access Mythos, the next best option is Claude Opus 4.7 — which still leads the publicly available field on complex reasoning tasks and has strong capability for threat modelling, vulnerability analysis, and security code review.

Role 5: The Founder or Builder

Recommended model: GPT-5.5

If you are a founder, product manager, or generalist builder who needs a single capable model for a wide range of tasks — writing, analysis, customer support automation, light coding, workflow design — GPT-5.5 is the most practical choice right now.

Released on April 23, 2026, GPT-5.5 (“Spud”) is OpenAI’s most capable and broadly accessible model to date. It is available today to all ChatGPT Plus, Pro, Business, and Enterprise users, making it the easiest frontier model to get into the hands of a non-technical team without API setup.

Key strengths for founders and builders:

- Broadly capable — strong across writing, analysis, coding, and multi-step task execution

- ChatGPT integration — immediately usable without API credentials or infrastructure

- Customer-facing use cases — well-suited for customer support automation, lead qualification, and sales agent workflows

- Agentic execution — capability gains are strongest in agentic coding and computer use per OpenAI’s own release notes

One important caveat: as of April 24, 2026, GPT-5.5 API access is not yet available — OpenAI says it’s “coming very soon.” If your team requires API integration today, Claude Opus 4.7 or Claude Sonnet 4.6 are the better options while you wait.

The Role-to-Model Decision Framework

Stop asking “which model is best?” Start asking “best for what?”
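One way to make the framework concrete is a simple lookup from role to model. The sketch below encodes the primary picks from the five role sections of this guide, along with the alternative each section names. The role labels, the `pick_model` helper, and the `needs_public_api` flag are illustrative names invented here, not part of any vendor SDK.

```python
# Primary pick and named alternative per role, as recommended in this guide.
ROLE_TO_MODEL = {
    "software developer": ("Claude Opus 4.7", "GPT-5.5"),
    "data scientist": ("Gemini 3.1 Pro", "Claude Sonnet 4.6"),
    "agent builder": ("Claude Sonnet 4.6", "Claude Opus 4.7"),
    "security researcher": ("Claude Mythos", "Claude Opus 4.7"),
    "founder or builder": ("GPT-5.5", "Claude Opus 4.7"),
}

# Models this guide describes as restricted or lacking a public API
# as of late April 2026.
RESTRICTED_OR_NO_API = {"Claude Mythos", "GPT-5.5"}


def pick_model(role: str, needs_public_api: bool = False) -> str:
    """Return the guide's pick for `role`, falling back when API access is required."""
    primary, alternative = ROLE_TO_MODEL[role.strip().lower()]
    if needs_public_api and primary in RESTRICTED_OR_NO_API:
        return alternative
    return primary


print(pick_model("Software Developer"))
print(pick_model("security researcher", needs_public_api=True))
print(pick_model("founder or builder", needs_public_api=True))
```

A table like this is easy to revisit as availability changes: once GPT-5.5 ships an API, for example, removing it from `RESTRICTED_OR_NO_API` is the only edit needed.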

Cost Reality Check

The right model for your role is one thing. The right model for your budget is another. Here’s what the frontier actually costs in April 2026:

| Model | Input $/1M | Output $/1M | Cache Savings | Best Cost Scenario |
|---|---|---|---|---|
| Gemini 3.1 Pro | $2.00 | $12.00 | Yes | Large input contexts, data-heavy workloads |
| Claude Sonnet 4.6 | $3.00 | $15.00 | Up to 90% | High-volume agentic pipelines with caching |
| Claude Opus 4.7 | $5.00 | $25.00 | Yes | Complex coding tasks where quality matters |
| GPT-5.5 | $5.00 | $30.00 | — | Token-efficient tasks (72% fewer output tokens) |

The cost picture favors Gemini 3.1 Pro for data-intensive work and Claude Sonnet 4.6 for high-volume agentic pipelines, where a prompt caching discount of up to 90% can dramatically reduce real-world spend.
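How much caching helps depends on what share of your input is actually served from cache. The following is a rough sketch, assuming the full 90% discount applies to every cached input token and ignoring any surcharge for writing to the cache. The cached fractions are hypothetical, and `effective_input_price` is a helper defined here for illustration.

```python
SONNET_INPUT_PRICE = 3.00  # USD per 1M input tokens, from the table above
MAX_CACHE_DISCOUNT = 0.90  # the "up to 90%" savings on cached input tokens


def effective_input_price(
    base_price: float,
    cached_fraction: float,
    discount: float = MAX_CACHE_DISCOUNT,
) -> float:
    """Blend cached and uncached rates into one effective price per 1M tokens."""
    if not 0.0 <= cached_fraction <= 1.0:
        raise ValueError("cached_fraction must be between 0 and 1")
    cached_part = cached_fraction * base_price * (1 - discount)
    uncached_part = (1 - cached_fraction) * base_price
    return cached_part + uncached_part


# Hypothetical cache hit rates for an agent that resends a long system prompt.
for fraction in (0.0, 0.5, 0.8):
    price = effective_input_price(SONNET_INPUT_PRICE, fraction)
    print(f"{fraction:.0%} cached: ${price:.2f} per 1M input tokens")
```

The saving only approaches 90% when nearly all input is cached, so pipelines with a large, stable prefix (system prompt, tool definitions, shared documents) benefit most.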

The Honest Summary

The debate about which model is “the best” in 2026 is a distraction. Here’s the honest summary:

- You write code all day → Claude Opus 4.7. It understands your codebase, not just the file you’re looking at.

- You analyze data and do research → Gemini 3.1 Pro. The science benchmarks and context window are unmatched at the price.

- You build autonomous agents → Claude Sonnet 4.6. The agentic infrastructure and computer use scores make it the clear choice.

- You do security research → Mythos if you can get it, Opus 4.7 if you can’t.

- You run a company or build products → GPT-5.5 via ChatGPT, today, without API setup friction.

The underlying shift is more important than any single recommendation: frontier AI is now specialized. The models that will define the next 12 months are not general-purpose assistants — they are domain-optimized tools. Your job is not to pick the smartest model. It is to pick the right tool for your specific job.

The teams that figure this out early will move faster than those still debating benchmark leaderboards.

References:

- GPT-5.5 vs Claude Opus 4.7: Benchmarks & Coding Compared — llm-stats.com

- Gemini 3.1 Pro: Google’s Most Advanced AI Model 2026 — Google Blog

- Claude Sonnet 4.6: Features, Access, Tests, and Benchmarks — DataCamp

- AI Models to Watch in 2026 — ProDevs

- What is Mythos and why are experts worried — Scientific American

- OpenAI announces GPT-5.5 — CNBC

About the Author

Aqil Khan is an Agentic AI Engineer and Data Governance & Analytics Consultant specializing in building data pipelines and autonomous AI systems. He writes about the frontier of AI coding assistants, agentic workflows, and intelligent data systems at Towards Agentic AI.